SECURITY, ENCRYPTION AND SECRECY: TAKE A SURVEY AND FIND OUT WHERE YOU STAND

March 25, 2016

I’ve been thinking about this whole digital security and encryption mess that’s been in the headlines recently. As an interested citizen (and absolute amateur and non-expert), I’m trying to parse the various sides of this issue. All sides attempt to present their cases as relatively simple and absolute, but there is so much recent history and context at work here that I sense nothing is as simple as it’s portrayed.

The FBI wants access to a terrorist’s phone (which they accidentally locked themselves!), presumably to see if there are others they communicated with who may be contemplating future actions, and Apple is making a public case about not giving them that access/key—claiming that by giving them access they’d have access to all Apple phones. That’s how this issue has been framed.

The two sides of the issue taken by these parties are being painted by them as very black and white: Apple says it’s protecting its customers’ private information and the FBI says it needs the data to save lives from other potential terrorist attacks. I think that some of this is posturing, drama and theatrics (by both sides) that threatens to obscure how complex this issue is; there are many nuances here when you take it apart, but what we get, especially from online media, is ideology, marketing and shouting. I think that both sides have valid points, but it also seems to me that both sides are being unrealistic and somewhat hypocritical. Is it possible to weigh their arguments and see what makes sense?

I’m going to try to take the arguments apart in my own amateurish way, and at the end of this post we have added a survey so we can see what the consensus seems to be on this subject, beyond just this one incident.

Encryption and the Internet

The argument for encryption goes much further than this one phone, as recent Turing Award winners Whitfield Diffie and Martin Hellman have pointed out—and these two should know, as they invented the encryption technologies that make internet commerce and semi-secure communication as we know it possible. Back then the government wasn’t too keen on the widespread adoption of their work, as it allowed encryption to spread into the private sector. Today, Hellman warns that allowing the FBI access to the phone in question would set an international precedent and would likely lead to similar requests from less democratic governments—which could have irrevocable consequences. You can read more on Diffie and Hellman’s point of view in The Guardian article, "Turing Award goes to cryptographers, who are backing Apple in FBI war."

(As an aside, Diffie is very smart and cool—he and his wife are using his $500,000 share of the Turing Award money to publish a book about what they’ve learned from 49 years of marriage and how it can be applied to making the world a more peaceful place—and, even though he had a hand in the gestation of PowerPoint, we won’t hold that against him.)

Snail mail set a privacy precedent—it has long been against the law to open someone else’s mail. Before the internet, money and all sorts of private information were sent via regular mail, and it was accepted that no one except the recipient was allowed to look at it. And well before that, wax seals were used to secure letters.

Security and privacy are essential and valuable but, in my opinion, Apple could have quietly cooperated on just that one phone if the FBI quietly gave it to them—they might have unlocked it themselves (NOT given out the key, which I suspect they do have in their possession, although they claim otherwise) and then given just the data to the FBI (NOT the phone or the key)—which could have been the end of the story. No backdoors opened to the FBI or NSA or whoever wanders in there. (Maybe.)

But instead, and in many ways justifiably so, Apple decided to blow up the issue and make a public case about general internet security...and very much about their own reputation. No surprise that many other tech companies jumped on this bandwagon, claiming that they are all about protecting their customers. Hmm. But is that what it’s really about?

Reputation is all-important in the security arena. Security depends on trust and without it no one would buy things online or, really, anywhere else. A lot of trust goes into a purchase or any economic exchange: you trust that you won’t be overcharged, you trust that your credit card information won’t be passed on to whoever, you trust that the product will be delivered as advertised. Similarly, the finance industry, the advertising industry—all of them—trust that the information and data they share between partners is secure. Without this security and trust, the entire world of online-based business transactions would fall apart. Maybe it will anyway, but there is a very large house of cards built on trust, so there is an incentive to keep it standing.

However, despite how critical security is to the digital world, few of these tech companies sounded the alarm when the NSA quietly asked them quite a few years ago to make folks’ data available to the government...perhaps because they thought no one would ever know. The data big tech collected (and still collects by the bucketload!) allows them to know way more about us than the FBI or the NSA—that’s why those government organizations wanted it. As Terry Gross pointed out in her Fresh Air interview with journalist Fred Kaplan (which you can listen to here), these companies know our IDs, social security numbers, where we shop, who we are in contact with, what we take photos of, our likes and dislikes, and so on. It’s no surprise that collecting such personal information has become increasingly integral to big tech business models over the past 10 years.

Here’s a typical point of view on this perceived hypocrisy from a citizen:

Privacy? People post personal details of their daily life to Facebook, Twitter and YouTube; our Internet searches are tracked and sold; the government tracks the senders and recipients of email; it can ask libraries for a list of books we’ve checked out; we are watched by an ever-increasing array of surveillance cameras; with a judge’s warrant, the police can track our movements, tap our phones, even come into our homes and rummage through every drawer. Yet when the F.B.I., with a warrant, asks to look into the phone of a dead terrorist, Apple puts up a furious row.

The fact that Apple has won over much of the public—the same public that seems perfectly happy to have its daily activity, interests, opinions and contacts accessible to all the world—as well as most privacy advocates, who seem to have done little to stem the increasing corporate invasion of privacy, makes Apple’s efforts to block access to Syed Farook’s phone look like the marketing exercise that the Justice Department claims it is. And like most of Apple’s marketing campaigns, it is a highly successful one. We have become a nation of sheep, and Silicon Valley is our shepherd.

The above letter, by Samuel Reifler, was written in response to The New York Times article "Apple Battle Strikes Nerve" (the online version of the article can be read here). He has a point, but so do the tech corporations—we do need to feel that our data is secure for the economy and the internet to work...and feel that the government isn’t spying on all of us.

To be clear, I find it heinous that governments are spying on citizens without a warrant or justification, and at the same time I find it very disturbing that many online business models track everything we do as well.

Edward Snowden’s leaks put an end to the mythical belief that our personal information was in fact secure. Not only was it made clear that the NSA and others were hoovering up our data, but also that internet service providers (ISPs), tech companies and cable providers were handing it to them with no public grumbling—or even public knowledge. The reputations of big tech and ISPs were damaged by the Snowden revelations, or at least made subject to public scrutiny and suspicion. Only ONE small company actually bucked the NSA—the encrypted webmail service Lavabit. It’s been proposed that the government went after them because Snowden had used them to keep some of his communications secure. Rather than hand over their users’ data to the government, Lavabit nobly closed up shop and went out of business. (You can read more about Lavabit founder Ladar Levison’s decision to do so in this article from The Guardian.) Did anyone else who is moaning now do that? There are rumors afoot that Apple employees would rather quit than assist the government in unlocking Syed Farook’s phone (per this New York Times article)—but that’s nowadays, with their reputation and the whole of the public’s trust in online business at stake. After the Snowden leaks, quite a few European companies had second thoughts about using US-based cloud services, which are a big source of revenue for the American companies that provide them. Wavering trust in US-based companies AND the NSA was putting that house of cards in jeopardy.

So...how do tech firms and ISPs re-establish those broken reputations? They make a stink that they are all about securing customers’ data—data that they acquire in exchange for free web searching, free email, etc., when we click "agree". There’s a little hypocrisy at work here on the part of the general public, as Reifler pointed out: we don’t want governments having access to what we do (which makes a lot of sense), yet we give that same access to tech companies. How did these companies get to be so trusted? By making cool gadgets and offering free web search?

What’s at stake here?

So, what’s at stake for these companies? The Snowden leaks were, in my opinion, a time of crisis for web business. The think tank Information Technology & Innovation Foundation initially estimated that the U.S. computing industry could lose up to $35 billion as a consequence of the loss of trust caused by the Snowden revelations. And, more recently, The New York Times pointed out that "a Pew Research poll in 2014 found more than 90 percent of those surveyed felt that consumers had lost control over how their personal information was collected and used by companies." Today tech companies know that customers would depart in droves—and consequently collapse the whole online business structure—if they perceived their information and data to be insecure. So, in order to keep up appearances, these companies must enact a theater of security.

As I mentioned above, the idea of security and privacy has its merits. But the folks who seem to be advocating for it also seem to be the ones hoovering up our data, which makes this issue so complicated. The tech companies want security, but at the same time they don't want our data to be kept secure from them. So, in order to establish a reputation as being secure, these companies MUST publicly support encryption—but meanwhile their user agreements in fact run counter to privacy in many ways. Facebook, especially, has a history fraught with privacy problems.

These giant corporations also argue that they use the data they collect to our benefit. Perhaps most obviously, there’s the convenience factor—personalized recommendations, search efficiency, predictive search. We like all that convenience.

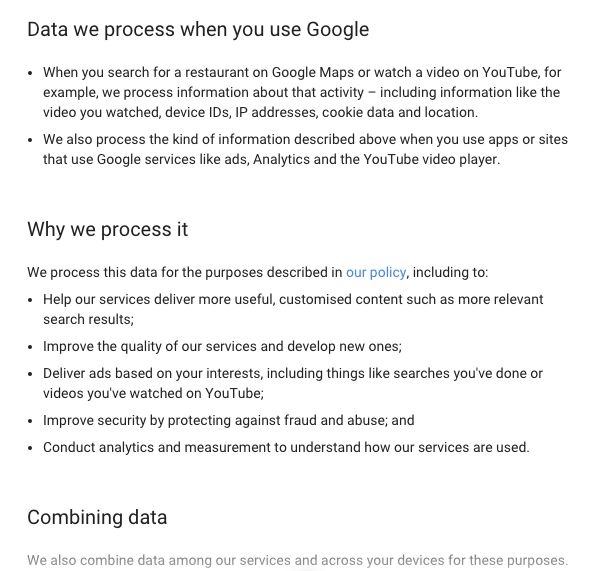

Here’s a screenshot of just ONE PAGE of Google "agreements" that have popped up recently which lists some reasons why they collect data:

There’s also the argument that analysis resulting from data collection can do a lot of good in the world, though little is heard on this front right now as the tech companies make their encryption case—to do so might remind everyone that they’re collecting data, too. As the Engineering Social Systems program at Harvard observes:

Big data constitute a huge opportunity. Never before have researchers had the opportunity to mine such a wealth of information that promises to provide insights about the complex behavior of human societies. While the privacy implications of this data should not be understated, we aim to show that these types of massive datasets can be leveraged to better serve both the billions of people who generate the data, and ultimately the societies in which they live.

For example, some of the massive amount of data that is being collected is enabling researchers at Harvard to predict food shortages in Uganda, create models that explain student learning outcomes, and understand how communication patterns reflect poverty. Some medical and even judicial decisions have been shown to produce a higher percentage of beneficial results when based on data and algorithms rather than when made by humans, as this magazine article points out: "Data-driven algorithms have proved superior to human judges and juries in predicting recidivism, and it’s virtually certain that computer analysis could judge parole applications more effectively, and certainly less capriciously, than human judges do." I don’t know how soon we’ll be willing to accede to machines making decisions about our health or whether or not a criminal should be paroled, but the studies do show that they avoid the inherent biases of those nasty humans!

And then there are the "beneficial effects" of data collection that business loves—targeted marketing and all the rest. Some of us might be ambivalent about those "benefits", but most don’t seem to mind them...yet...and we often like the uncanny recommendations. The applications of these intrusions are made to seem benign, but that’s not always the case. In fact, hiring employees, granting loans and mortgages, etc., based on data that has been run through biased algorithms are far from benign. Will a black person who lives in a certain neighborhood still have issues getting a loan even if it’s not being processed by a bigot but rather determined by a biased algorithm? The answer, sadly, is yes, as the algorithm looks at statistical averages and not at individuals.

That said, I believe encryption and privacy are necessary—and it’s about time. It’s a good thing that tech folks are standing up to government snooping, but there may be exceptions, such as legally granted search warrants.

The Government’s Position

One can see that being able to hide transactions from the government means that citizens are safe from unfair politically-based abuse, as happens in dictatorships and in less-than-transparent societies. But the government’s point of view is that by keeping activities secret and private, all sorts of nefarious activities will inevitably flourish—kiddy porn, drugs, arms sales, terrorism, hate crimes, bullying...the list goes on and on. Shouldn’t the government be able to track such activities, hypothetically at least? Do we want all of that to be absolutely beyond reach of law enforcement?

Openness, it has been proven, goes hand in hand with democracy. It’s a bit strange and hypocritical to have that openness espoused by the U.S.A., home of the NSA and CIA Black Sites, but I do think that there is still some reason left in the government. In a recent New Yorker article, Tom Malinowski, the Assistant Secretary of State for Democracy, Human Rights, and Labor, is quoted as having said, “One of our articles of faith here—backed up by evidence, I hope—is that open societies are a bulwark against extremism…” Transparency has all sorts of positive repercussions—states with open and functioning democracies tend to do better economically, too. So, agreed, we need to be able to prevent all sorts of secret activities from going on within our government...and maybe in the private sector, too.

But for the government to be on the lookout for sexual predators, drugs, guns and all sorts of other illegal activities, one can see that they would need some search and surveillance capabilities. And, sadly, their mass collection of information on citizens does not seem to have been a sure-fire protection from terrorists and extremists (agencies will claim otherwise, I know), which is why they are arguing that in order to do better they need MORE access to our data rather than have it be curtailed.

Others have pointed out that to trust the government not to abuse such access is naive, especially in the wake of the Snowden leaks. We KNOW that the government has already abused these privileges when it thought no one was watching, so why legitimize such access now?

As defenders of encryption point out, we have confirmation that the government has spied on civil rights activists in the past, as well as many other organizations that are far from being terrorists. The government’s spying and dirty tricks are often ideologically based. Do we want to give them more tools to be able to do this? Doesn’t that endanger legitimate dissent, discussion and eventual reform? And in turn endanger what’s left of our democracy?

Snowden has said recently that the FBI can access that phone if they want to (see The Guardian’s article), and I am inclined to believe him—that was his job, after all. So all of this, then, is mere posturing by the FBI in hopes of using the terrorist dilemma as a means to gain a key. And if that’s true, it puts the FBI in the same reputation-building position as the tech companies—only the goal of the FBI isn’t securing the trust of customers, it’s justifying the creation of a backdoor in the name of national security. And, as of March 22nd, there is a backdoor the FBI says it may even be able to open without the help of Apple—a development which, The Guardian noted, may turn the tables a bit:

if investigators figure out a way to hack into the device without Apple’s help, are they obligated to show Apple the security flaw they used to get inside? Attorneys for Apple, which almost assuredly would then patch such a flaw, said they would demand the government share their methods if they successfully get inside the phone.

Recent news items seem to confirm this—the FBI hired a hacker (the FBI is notoriously incompetent, as revealed during this sad but hilarious Q&A between the FBI director and Congressman Issa, who knows a thing or two about tech), and this hacker cooperated with them rather than showing Apple where the security hole is. (Why would the hacker not go to Apple? This article proposes it’s because Apple doesn’t pay for tips as other tech companies do.)

But despite the Keystone Cop-like behavior of the FBI, do we at the same time trust these giant, all-encompassing, all-seeing tech corporations with the same information? These corporations already have a lot of data on us and can access it whenever they want. It’s a big business. One company that does this, Acxiom, is referred to as “the biggest company you’ve never heard of”. As described on their Wikipedia page:

Acxiom collects, analyzes, and parses customer and business information for clients, helping them to target advertising campaigns, score leads, and more. Its client base in the United States consists primarily of companies in the financial services, insurance, information services, direct marketing, media, retail, consumer packaged goods, technology, automotive, healthcare, travel, and telecommunications industries, and the government sector.

Companies like this often sell information about us to others. Who says a giant corporation has any interest in our rights, democracy, or much else beyond themselves?

So, it seems that both sides are playing a game here—both somewhat hypocritically and both backed by some very reasonable arguments, too.

A Net is Full of Holes

If we zoom out from this squabble, a larger question looms about the nature of the web itself. I have my own doubts that the internet can ever be secure, due to its nature and architecture. It was built on sharing and openness, yet encryption is simultaneously essential. There’s the conundrum right there—how to have both. Passwords aren't the real issue, as hackers can gain access by phishing (Diffie and Tim Berners-Lee will know way more about all that). As The New York Times recently cited, “An Apple spokeswoman referred to an editorial by Craig Federighi, the company’s senior vice president for software engineering, in which he wrote, ‘Security is an endless race—one that you can lead but never decisively win.’” So, NEVER 100% secure. What percentage, then, would make us feel happy and relatively secure? It’s about feelings and trust, not absolutes.

Knit your own security!

Does security equal secrecy? Are they the same thing? If security is possible and if encryption works, then the resulting blanket of secrecy can cover so much—our private lives, our business dealings and creative IP-related endeavors, as well as Wall Street, drug dealing, black ops, opportunistic finance, child porn, hate and xenophobia, military adventures and activities, surveillance of private citizens, tech, medical records, legal records, and so, so much more. Do we want all of that able to be hidden under the cloak of secure encryption? To some of these things we might say "yes," and to others maybe not so much.

We might assume that "of course one's legal or medical files must remain private" or that "development of new products and scientific ideas needs to be allowed to fail and stumble in private in order to get the kinks out"—but where does one draw the privacy line? One could argue that the diplomatic process does, in fact, also require some secrecy. It’s been pointed out to me that the agreement between Kennedy and Khrushchev over the Cuban Missile Crisis would have fallen apart had the negotiations been carried out in full view of the press and public. Hard to say—that blanket over a diplomatic negotiation can also be used to hide some pretty nasty handshakes.

What do I think?

I maintain that secrecy automatically leads to abuses. Always has. It's not even a question—it's inevitable. We need security and privacy to exist in an online world, but the things that accompany absolute unlimited secrecy will inevitably lead to chicanery. Keeping business and personal exchanges safe from the prying eyes of any government is a good thing, as are properly vetted and transparent search warrants like those for house searches (an exception to privacy that we generally tend to accept), but blanket snooping of the kind instituted by our government post-9/11 (and by other governments, too) is not the way to do this.

Where do YOU stand?

OK, below is a survey we can take for fun to see where we stand on these ideas and perhaps to use as a place to start becoming less absolutist in our principles (use this link to access the survey if you are on a mobile device or tablet). We will post the results of the questionnaire here and email them to the website's subscribers on April 11th, 2016 (so be sure to submit your answers before then!). Your answers will be anonymous and will not be displayed as individual responses but rather as a composite graph that illustrates how everyone responded to the different questions and tweaks.

DB

New York City